Page 84 - AIH-2-2

P. 84

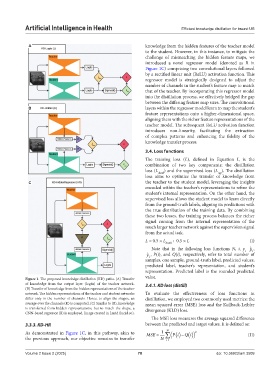

Artificial Intelligence in Health Efficient knowledge distillation for breast US

A knowledge from the hidden features of the teacher model

to the student. However, in this instance, to mitigate the

challenge of mismatching the hidden feature maps, we

introduced a novel regressor model (denoted as R in

Figure 1C) comprising two convolutional layers followed

by a rectified linear unit (ReLU) activation function. This

regressor model is strategically designed to adjust the

number of channels in the student’s feature map to match

that of the teacher. By incorporating this regressor model

into the distillation process, we effectively bridged the gap

between the differing feature map sizes. The convolutional

B layers within the regressor model learn to map the student’s

feature representations onto a higher-dimensional space,

aligning them with the richer feature representations of the

teacher model. The subsequent ReLU activation function

introduces non-linearity, facilitating the extraction

of complex patterns and enhancing the fidelity of the

knowledge transfer process.

3.4. Loss functions

The training loss (L), defined in Equation I, is the

combination of two key components: the distillation

loss (L distill ) and the supervised loss (L ). The distillation

sup

loss aims to optimize the transfer of knowledge from

C the teacher to the student model, leveraging the insights

encoded within the teacher’s representations to refine the

student’s internal representation. On the other hand, the

supervised loss allows the student model to learn directly

from the ground-truth labels, aligning its predictions with

the true distribution of the training data. By combining

these two losses, the training process balances the richer

signal coming from the internal representation of the

much larger teacher network against the supervision signal

from the actual task.

L = 0.5 × L distill + 0.5 × L (I)

Note that in the following loss functions N, i, y ˆ p ,

i,

i

ˆ y , P(i), and Q(i), respectively, refer to total number of

i

samples, one sample, ground-truth label, predicted values,

predicted label, teacher’s representation, and student’s

representation. Predicted label is the rounded predicted

Figure 1. The proposed knowledge distillation (KD) paths. (A) Transfer value.

of knowledge from the output layer (logits) of the teacher network. 3.4.1. KD loss (distill)

(B) Transfer of knowledge from the hidden representations of the teacher

network. The hidden representations of the teacher and student networks To evaluate the effectiveness of loss functions in

differ only in the number of channels. Hence, to align the shapes, an distillation, we employed two commonly used metrics: the

average over the channels (K) is computed. (C) Similar to (B), knowledge mean squared error (MSE) loss and the Kullback-Leibler

is transferred from hidden representations, but to match the shape, a divergence (KLD) loss.

CNN-based regressor (R) is employed. Image created in Lucid (lucid.co).

The MSE loss measures the average squared difference

3.3.3. KD-HR between the predicted and target values. It is defined as:

As demonstrated in Figure 1C, in this pathway, akin to MSE 1 N Pi 2 (II)

Qi

the previous approach, our objective remains to transfer N i1

Volume 2 Issue 2 (2025) 78 doi: 10.36922/aih.3509