Page 97 - AN-4-4

P. 97

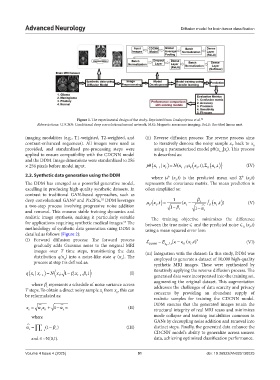

Advanced Neurology Diffusion model for brain tumor classification

Figure 1. The experimental design of the study. Reprinted from Onakpojeruo et al. 52

Abbreviations: CDCNN: Conditional deep convolutional neural network; MRI: Magnetic resonance imaging; ReLU: Rectified linear unit.

imaging modalities (e.g., T1-weighted, T2-weighted, and (ii) Reverse diffusion process: The reverse process aims

contrast-enhanced sequences). All images were used as to iteratively denoise the noisy sample x back to x

0

T

provided, and standardized pre-processing steps were using a parameterized model pθ(x |x ). This process

t−1

t

applied to ensure compatibility with the CDCNN model is described as:

and the DDM. Image dimensions were standardized to 256

t

t ,

× 256 pixels before model input. p x t 1 | x N x( t 1 ; x t, ), x t (IV)

t

2.2. Synthetic data generation using the DDM where μ (x ,t) is the predicted mean and Σ (x ,t)

θ

θ

t

t

The DDM has emerged as a powerful generative model, represents the covariance matrix. The mean prediction is

excelling in producing high-quality synthetic datasets. In often simplified as:

contrast to traditional GAN-based approaches, such as

deep convolutional GANs and Pix2Pix, DDM leverages xt, 1 x ( t x t,) (V)

8

52

a two-step process involving progressive noise addition t 1 t 1 t

and removal. This ensures stable training dynamics and t t

realistic image synthesis, making it particularly suitable The training objective minimizes the difference

for applications requiring synthetic medical images. The between the true noise ∈ and the predicted noise ∈ (x ,t)

55

methodology of synthetic data generation using DDM is using a mean squared error loss: θ t

detailed as follows (Figure 2):

(i) Forward diffusion process: The forward process E [ ( x t,) 2 (VI)

gradually adds Gaussian noise to the original MRI DDPM x 0 ,, t t

images over T time steps, transitioning the data (iii) Integration with the dataset: In this study, DDM was

distribution q(x ) into a noise-like state q (x ). The employed to generate a dataset of 10,000 high-quality

0

T

process at step t is defined as: synthetic MRI images. These were synthesized by

t

t

qx x| t N x ; 1 t x , 1 t (I) iteratively applying the reverse diffusion process. The

generated data were incorporated into the training set,

1

t 1

augmenting the original dataset. This augmentation

where b represents a schedule of noise variance across

t

T steps. To obtain a direct noisy sample x from x , this can addresses the challenges of data scarcity and privacy

concerns by providing an abundant supply of

t

0

be reformulated as:

realistic samples for training the CDCNN model.

x t x 1 t (II) DDM ensures that the generated images retain the

structural integrity of real MRI scans and minimizes

0

t

where mode collapse and training instabilities common in

GANs by decoupling noise addition and removal into

t 1 ( ) (III) distinct steps. Finally, the generated data enhance the

t 1 s

s

CDCNN model’s ability to generalize across unseen

and ∈~N(0,I). data, achieving optimized classification performance.

Volume 4 Issue 4 (2025) 91 doi: 10.36922/AN025130025